The Top 10 SEO Bet Just Lost Half Its Value, and Almost Nobody’s Adjusting

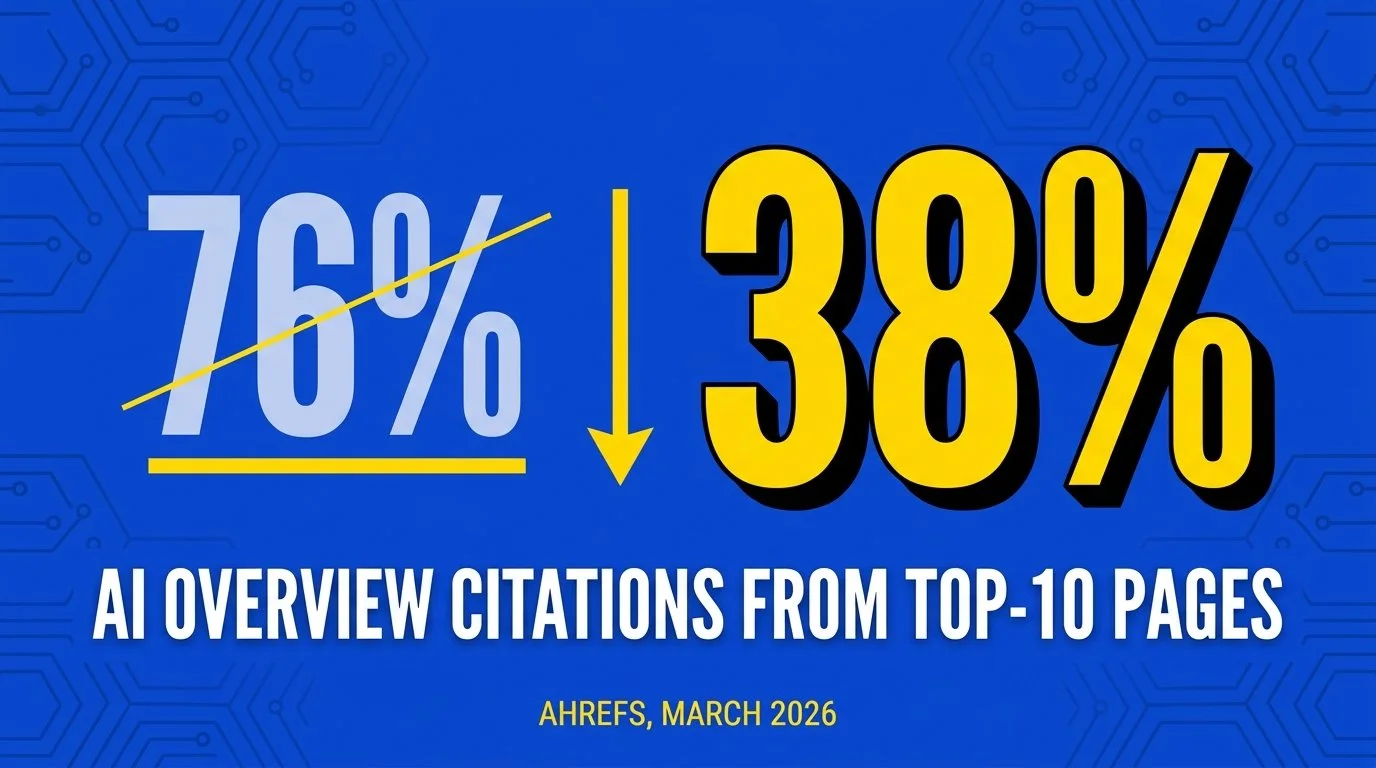

In March, Ahrefs published an updated study of 863,000 keyword SERPs and roughly 4 million AI Overview URLs. The headline finding? Only 38% of pages cited in Google’s AI Overviews also appeared in the top 10 organic results for that same query. Seven months earlier, the same study had pegged that overlap at 76%.

A separate BrightEdge analysis put the overlap even lower, somewhere around 17%. Methodology differs across the studies, so I wouldn’t bet the house on any single percentage, but they all point in the same direction, which is what I care about. A drop of that size in seven months is not a normal SEO fluctuation. It’s the kind of move you’d expect over two or three years, if at all, and most marketing teams I talk to are still optimizing as if it were pre-Covid times. Weren’t those the days?

The reason I’ve been chasing this data is that my book is about why AI recommends some brands and ignores others, and I’d rather not write the next edition with a bad model of how citation works. So, I’ve been pulling on the thread, and I think the picture that’s emerging is more interesting than the headline figure suggests.

What changed?

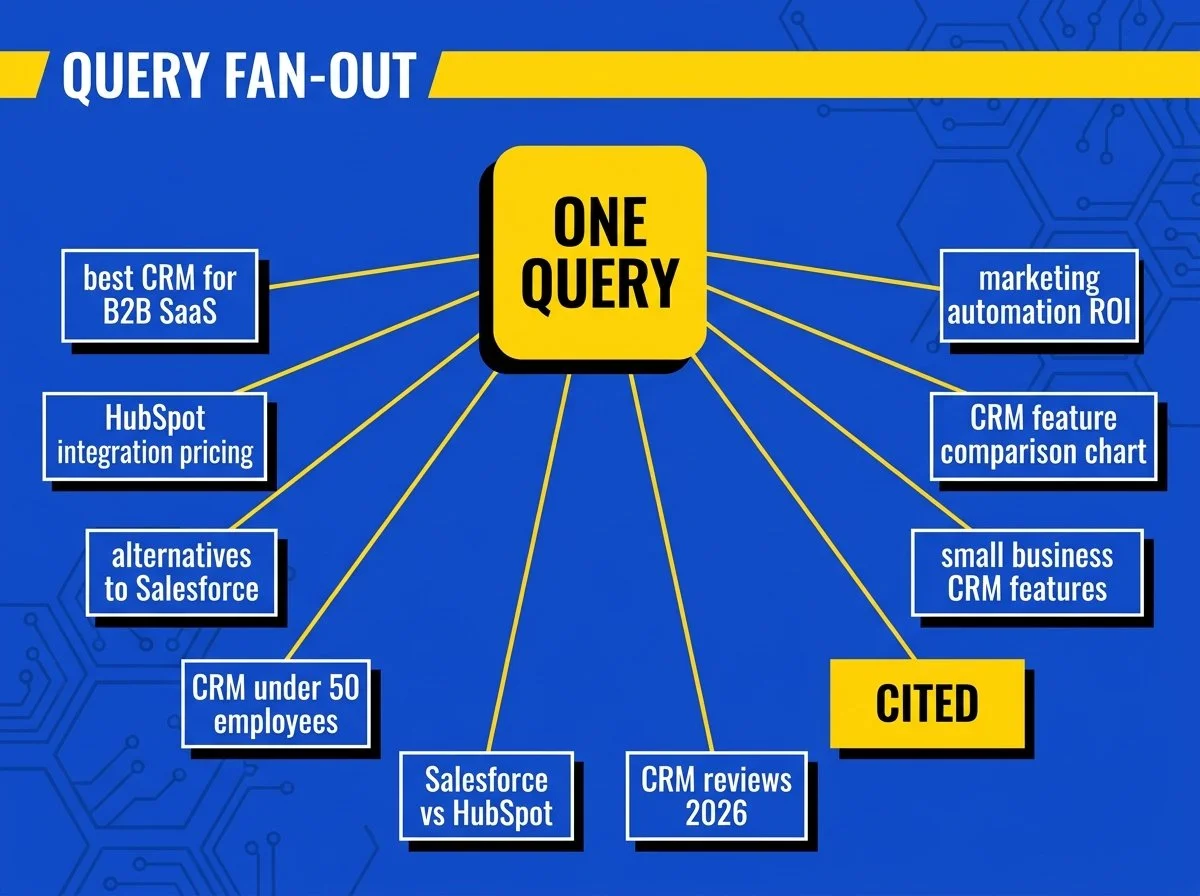

Query fan-out went from theory to default

The mechanism behind the shift is a thing called query fan-out, which Google’s been describing in patents for years. The formal term in patent US11663201B2 is “query variant generation,” but everyone in our world calls it fan-out. When you type a question into AI Overviews, AI Mode, ChatGPT Search, or Perplexity, the system doesn’t run your query as written. Instead, it expands your one question into a bunch of related sub-queries, runs them in parallel, and synthesizes an answer from the pages that show up across the whole spread.

This was already happening in 2024, but it wasn’t dominant. Google’s January 27, 2026, upgrade of AI Overviews to Gemini 3, as Ahrefs noted in their analysis, appears to have made fan-out the default behavior rather than the exception. A separate analysis of more than 72,000 AI-generated queries by 85SIXTY found that a single ChatGPT or Gemini prompt now triggers eight to ten parallel sub-queries before producing an answer, and 95% of those fan-out phrases have zero monthly search volume. They don’t exist in any keyword tool you own.

Most SEO advice still treats this as a wrinkle rather than the structural shift I think it is. If a single user prompt produces ten sub-queries, and the AI is pulling citations from the pages that win across that whole spread, ranking #1 for the user’s literal phrase only buys you exposure to one out of ten possible source pools. You can be the top result for “best CRM for B2B SaaS” and still lose the citation to a page ranking #34 for “CRM with HubSpot integration for under 50 employees” because your competitor’s page covered the sub-query and yours didn’t.

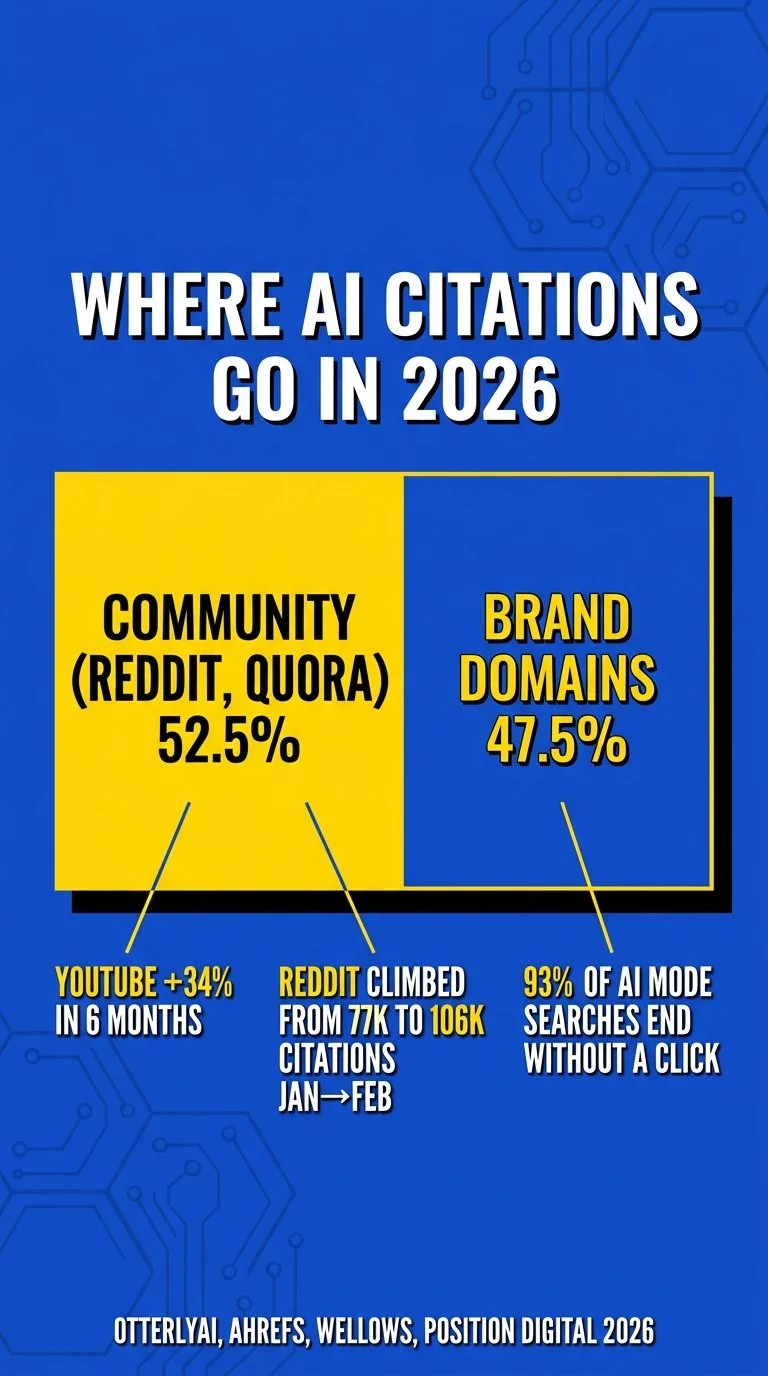

The buying audience is downstream of the citation now, not the rank

Most coverage of these numbers stops at “rankings still matter, but less,” which doesn’t tell you much about what to do. What the data tells me is that the path from query to revenue has gotten longer and more diffuse. Around 93% of AI Mode searches now end without a click, per Position Digital’s compilation of 150-plus AI SEO statistics, and AI Overviews have cut click-through rate to top-ranking organic pages by 58%. Those clicks haven’t simply disappeared. They’ve been redistributed across at least three places I can identify. A chunk goes to AI answers that don’t cite anybody, and a growing share goes to YouTube, which according to Ahrefs is now the most-cited domain in AI Overviews and grew its citation share by 34% in the last six months. The rest is going to community platforms; OtterlyAI’s analysis of more than a million AI citations from January and February 2026 found Reddit and Quora capturing 52.5% of AI citations, edging out brand domains.

If you’re trying to drive revenue rather than just traffic, the practical issue here is that your prospect’s mental model of you now gets shaped before they ever land on your site. They asked an AI a question and got back an answer that may or may not have included you. If you weren’t in the citation set, you didn’t enter their consideration, and most of the dashboards marketing teams are running weren’t built to measure any of that.

I’ve been writing about this in Explainable and presented it to the ANA’s Commerce Marketing and Digital & Social Committee earlier this month, and the pattern I keep running into is that marketing leaders mostly know about the citation problem in the abstract but very few have actually run the audit on their own brand. They’re aware in the way you’re aware of a noise from your engine, where you know you should probably get it checked out and you keep driving (or just turn the music up louder so you can’t hear it). That awareness gap is where competitors are taking share from you.

A practical playbook for the rest of 2026

There’s a lot of bad GEO advice circulating right now, much of it written by people who started thinking about this in November. Here’s what I’d lean on, with the sources, because every recommendation below has data behind it specific to 2026.

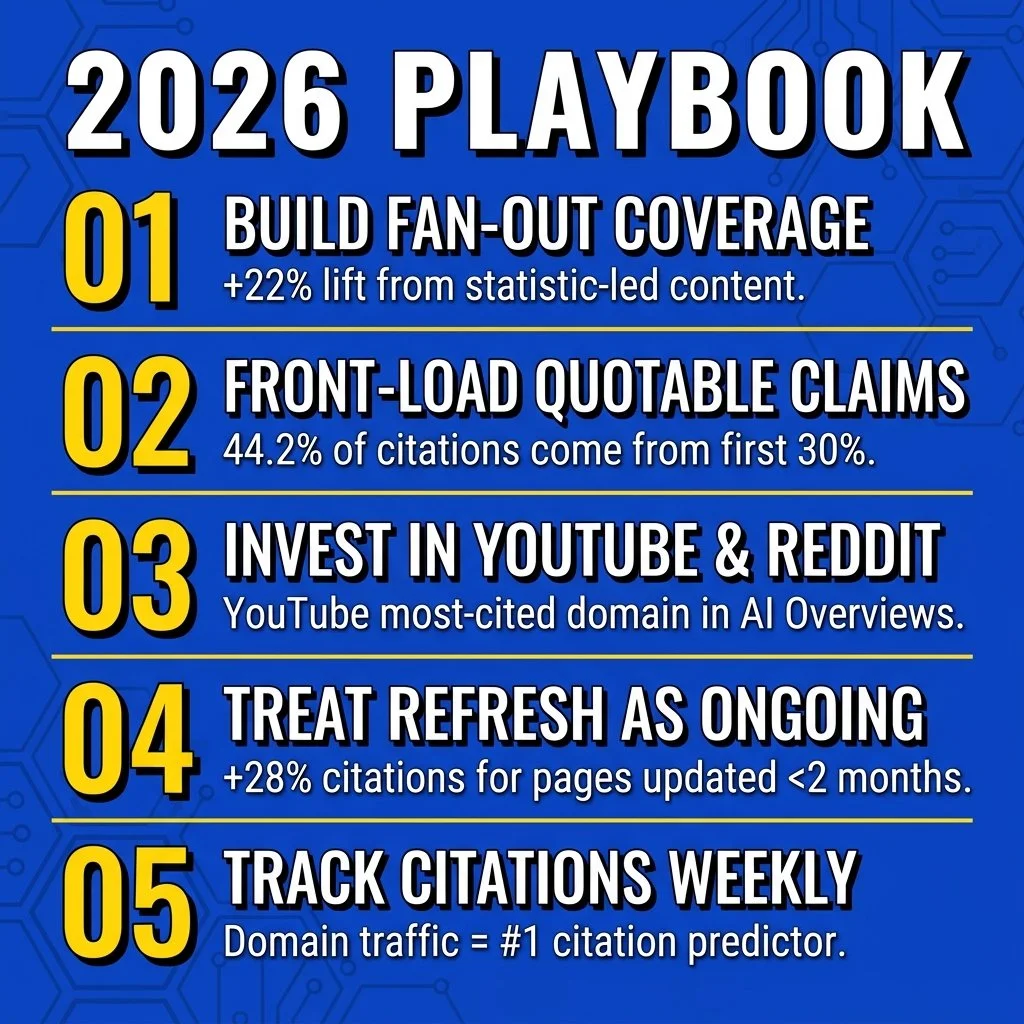

Build coverage across the whole fan-out tree of a primary topic

The Princeton GEO study referenced in Zen Media’s recent analysis found that statistic-led content earns a 22% visibility lift in AI answers compared with non-statistical content, and 93.67% of Google AI Overview citations include at least one top-10 organic result. Take those two findings together and you get a working principle. Baseline organic health is the entry ticket, and what differentiates winners now is whether their content cluster covers the comparison queries, pricing queries, “alternatives to” queries, and the implicit follow-ups that show up in fan-out. The work I’d suggest is unsexy. Pick a primary topic, sit down and write out 15 sub-questions a real buyer would ask after it, and make sure something on your site addresses each one with an extractable, fact-dense answer near the top.
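If you want to make that exercise less hand-wavy, here’s a minimal sketch of the coverage check in Python. Everything in it is my own illustration: the `Page` class, the `map_coverage` function, the sample sub-questions, and the crude "all key terms appear in the page text" matching rule are assumptions, not how any AI system actually selects sources. The point is only to surface which sub-questions have no page behind them yet.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str


def map_coverage(sub_questions: dict[str, list[str]], pages: list[Page]) -> dict[str, list[str]]:
    """Map each sub-question to the URLs whose text mentions all of its key terms.

    The key-term match is a rough stand-in for "this page addresses the question".
    """
    coverage = {}
    for question, terms in sub_questions.items():
        coverage[question] = [
            page.url
            for page in pages
            if all(term.lower() in page.text.lower() for term in terms)
        ]
    return coverage


# Hypothetical sub-questions (with hand-picked key terms) and site pages.
sub_questions = {
    "How much does it cost?": ["pricing"],
    "Does it integrate with HubSpot?": ["hubspot", "integration"],
    "What are the alternatives?": ["alternatives"],
}
pages = [
    Page("/pricing", "Pricing starts at $29 per seat per month."),
    Page("/integrations", "Our HubSpot integration syncs contacts in real time."),
]

coverage = map_coverage(sub_questions, pages)
uncovered = [question for question, urls in coverage.items() if not urls]
covered_share = 1 - len(uncovered) / len(sub_questions)
```

In practice you’d swap the toy inputs for your own list of 15 sub-questions and your real page text, then treat the `uncovered` list as the content backlog.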

Front-load your most quotable claims in the first 30% of the page

Kevin Indig’s analysis of 1.2 million verified ChatGPT citations, summarized by Radyant, found that 44.2% of all ChatGPT citations come from the first 30% of the content on a page. The model is reading the top of your page and looking for the highest “information gain” sentence in each section. So if your intro is throat-clearing, you’re handing the citation to whoever’s first paragraph isn’t throat-clearing. State the most definitive, data-backed claim you can defend, give it a number and a source, and put it before the first scroll.
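A quick way to sanity-check a page against that 30% figure is to see where its first number-bearing sentence lands. This is a rough sketch under my own assumptions: `first_stat_position` is a hypothetical helper, "contains a digit" is a crude proxy for "data-backed claim", and the sentence splitter is naive. It says nothing about how ChatGPT actually reads a page.

```python
import re
from typing import Optional


def first_stat_position(text: str) -> Optional[float]:
    """Return how far into the text (0.0 to 1.0) the first sentence with a digit begins.

    Returns None when no sentence contains a digit.
    """
    body = text.strip()
    if not body:
        return None
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", body)
    offset = 0
    for sentence in sentences:
        if re.search(r"\d", sentence):
            return offset / len(body)
        # +1 approximates the whitespace the split removed.
        offset += len(sentence) + 1
    return None


FRONT_LOAD_THRESHOLD = 0.30  # the "first 30%" figure cited above

# Hypothetical page copy with a throat-clearing intro.
page_text = (
    "Welcome to our blog. "
    "We love talking about CRMs. "
    "Our CRM cut onboarding time by 40% in a 2025 customer survey. "
    "Read on for more."
)

position = first_stat_position(page_text)
front_loaded = position is not None and position <= FRONT_LOAD_THRESHOLD
```

On the sample copy the first statistic starts past the 30% mark, so `front_loaded` comes back `False`. Moving that sentence to the top of the page is the whole fix.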

Pay attention to YouTube and Reddit, even if they aren’t your “channel”

YouTube accounts for 5.6% of all AI Overview citations and 18.2% of citations that come from outside the top 100, per Ahrefs. Reddit has been growing its citation share month over month across every AI surface tracked. Wellows’ study of 350,000-plus social citations showed Reddit citations climbing from 77,111 in January 2026 to 105,967 in February even as overall query volume in their dataset declined, meaning each query is now pulling more Reddit citations than the same query would have a month earlier. The implication is awkward for a lot of brands. You don’t have to become a YouTuber, but a small library of useful 8-to-12-minute videos with clean transcripts and accurate descriptions will probably outperform another blog post on the same topic for AI visibility, and showing up in three or four relevant subreddits as an actually useful contributor is now a citation pipeline whether you wanted it to be or not.

Treat content refresh as ongoing work rather than a quarterly exercise

Ahrefs’ analysis of 17 million citations across seven AI platforms found that pages updated within the last two months earn roughly 28% more citations than older content. The models appear to be reading your “last updated” timestamps and weighting freshness aggressively. A page that hasn’t been touched in 14 months is being treated as a decaying signal even if the underlying information is still accurate, which is annoying but seems to be where we are.
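Turning that into ongoing work mostly means having a list of what’s gone stale. Here’s a small sketch, assuming you can export a URL-to-last-updated mapping from your CMS. The `stale_pages` function, the sample URLs, and the 60-day cutoff (my approximation of "two months") are all illustrative choices, not anything the Ahrefs analysis prescribes.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 60) -> list[str]:
    """Return URLs last updated more than max_age_days before today, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [url for url, updated in last_updated.items() if updated < cutoff]
    return sorted(stale, key=lambda url: last_updated[url])


# Hypothetical export of last-updated dates from a CMS.
last_updated = {
    "/pricing": date(2026, 3, 10),
    "/guide": date(2025, 2, 1),
    "/compare": date(2026, 1, 15),
}

refresh_queue = stale_pages(last_updated, today=date(2026, 4, 1))
```

Sorting oldest first gives you a refresh queue you can work through weekly rather than a quarterly scramble.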

Track citations, not just rankings

This is the boring infrastructure point nobody wants to budget for, and it’s the one I’d put money on. It will separate teams that compound an advantage in 2026 from teams that spend Q4 trying to figure out where their pipeline went. Tools like Profound, Otterly, AVOS by Zen Media, and Ahrefs Brand Radar can give you weekly visibility on which prompts cite you, which cite competitors, and which cite nobody from your category. And of course, don’t forget about my free tool that you can use right now to check for SEO, AEO, and GEO scoring. Per SE Ranking’s December 2025 study of 2.3 million pages across 295,485 domains, domain traffic is the single strongest predictor of AI citation, with high-traffic sites earning 3x more citations than low-traffic ones. Citation share and traffic are tightly linked, and in my read shifts in citation share tend to show up a few months before your traffic dashboard reflects them. That makes citation share a leading indicator of where your traffic is heading.
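Whatever tool you use, the number you want out of it each week is simple. The sketch below assumes you can get, for each tracked prompt, the list of brands cited in the answer. The `citation_share` function, the record shape, and the brand names are hypothetical, and it doesn’t reflect any particular vendor’s export format.

```python
from collections import Counter


def citation_share(results: list[dict], brand: str) -> dict[str, float]:
    """Split tracked prompts into: cites us, cites only competitors, cites nobody.

    Each result is expected to look like {"prompt": str, "cited": list[str]}.
    """
    if not results:
        return {}
    counts = Counter()
    for result in results:
        cited = result["cited"]
        if brand in cited:
            counts["us"] += 1
        elif cited:
            counts["competitors_only"] += 1
        else:
            counts["nobody"] += 1
    total = len(results)
    return {key: counts[key] / total for key in ("us", "competitors_only", "nobody")}


# Hypothetical weekly snapshot for a brand called "Acme".
weekly_results = [
    {"prompt": "best CRM for B2B SaaS", "cited": ["Acme", "RivalCo"]},
    {"prompt": "CRM with HubSpot integration", "cited": ["RivalCo"]},
    {"prompt": "CRM for under 50 employees", "cited": ["OtherCo"]},
    {"prompt": "CRM pricing comparison", "cited": []},
]

share = citation_share(weekly_results, brand="Acme")
```

Run it per platform rather than pooled, and keep the weekly snapshots so you can watch the trend instead of a single reading.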

Where I think conventional wisdom is wrong

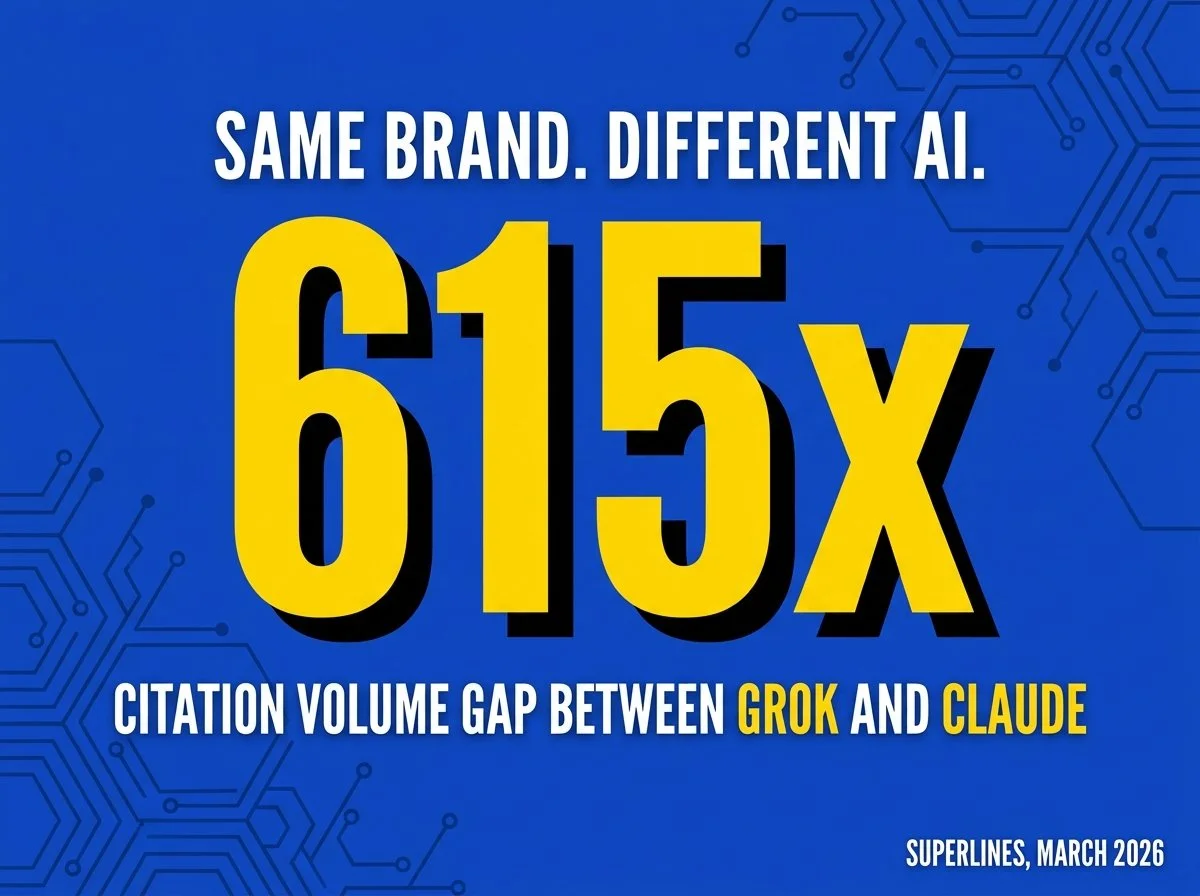

The figure that sticks with me from all of this is the 615x. According to Superlines’ March 2026 data, the same brand can see citation volumes differ by 615 times between Grok and Claude. Two AI surfaces, the same content, nearly three orders of magnitude apart. A lot of the advice circulating right now talks about “AI optimization” as a single discipline you can do once and check off, and the data is hard to square with that. Each platform’s citation behavior is different enough that you have to measure them separately or you’re guessing.

The other place I see smart teams making a mistake is treating GEO as a replacement for SEO, which it’s not. The 93.67% figure from Princeton, where AI Overview citations almost always include at least one top-10 organic result, is the floor under everything else in this conversation. If your fundamentals are weak, no amount of fan-out coverage is going to rescue you, and the orgs that are winning right now are mostly the ones that never stopped doing the foundational SEO work and added the citation layer on top of it.

The thing I’ve been saying in talks lately is that 2026 is going to be the year a lot of marketing leaders find out their AI visibility isn’t what they assumed it was. The measurement tools exist now in a way they didn’t even nine months ago, so the gap between teams who use them and teams who don’t is about to compound, and the further it compounds the harder it gets to close. The honest answer is that I don’t know exactly where this lands by Q4. I do think the citation audit is worth doing this quarter rather than next, partly because the next Gemini upgrade is probably going to shift the numbers again and you’ll want before-and-after data.

If any of this is the kind of problem you’re chewing on for your own brand, Explainable goes deeper into the patterns AI systems use when deciding which brands to surface and which to ignore, with the kind of frameworks I couldn’t cram into this blog post.

Jarred Smith is the author of Explainable: Why AI Recommends Some Brands & Ignores Others, an Amazon bestseller on AEO, GEO, and SEO. He’s a marketing leader with nearly 20 years of experience across healthcare, public media, retail, and environmental services. Find him at jarredsmith.com.