Your Brand Is Being Judged by Two Different ChatGPTs, and Most Marketers Don't Know Which One

If you've been watching your ChatGPT citation reports for the last few weeks and wondering why the numbers look weird, you're not crazy. Something did in fact change (well, two things, really), and neither one got the coverage it deserved because the AI search press spent most of April chasing a new PR Newswire reporting product and a flood of vendor launches instead of the thing most of us care about. Hint: your brand. (I guess it’s not really a hint if I just tell you. I never was good at keeping surprises.)

So, what happened? Sometime in early March, OpenAI moved the free ChatGPT experience over to a new default model called GPT-5.3 Instant. A few weeks later, GPT-5.4 Thinking started showing up in more places. On the technical side that sounds like a routine model swap, but if you look into the citation data, it’s pretty obvious that ChatGPT is now two separate search engines sharing a logo.

I'll get into the numbers in a second, but the short version is that the two models cite 93% different sources on the same prompts (Writesonic, March 2026), so your brand's visibility inside ChatGPT now depends on which ChatGPT the user happens to be talking to. It reminds me of being a kid and asking my mom for something and then asking my dad and getting two completely different responses. Of course, I always chose the response that benefited me (you know you did it too, don’t judge me). And since most brands are measuring one of them, or neither, or treating the whole thing as a single dashboard number, we've got a lot of people confidently reporting AI visibility metrics that don't describe the reality their customers are sitting in.

What the data shows

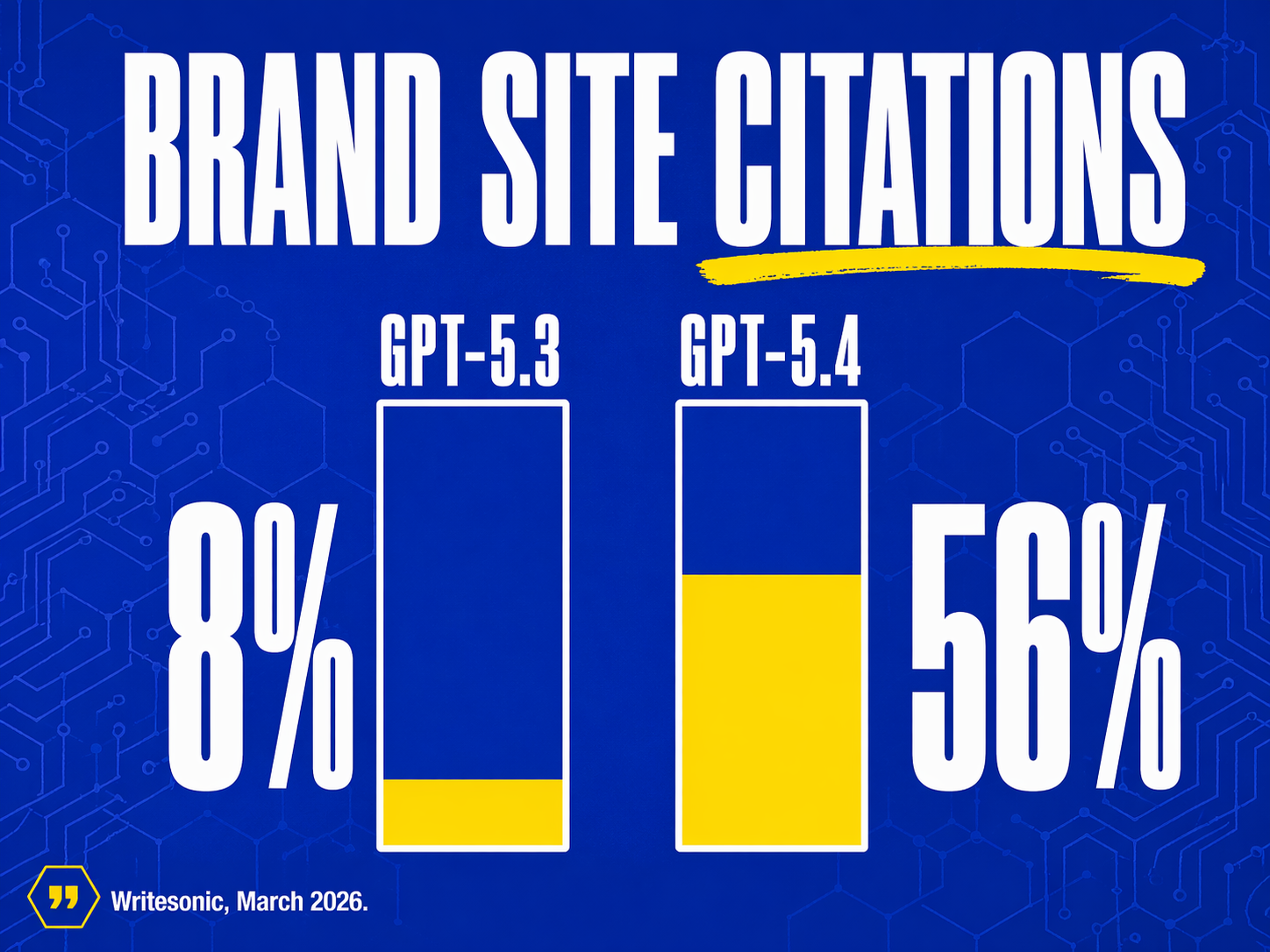

Samanyou Garg at Writesonic ran 119 ChatGPT conversations across 50 prompts and 16 categories, pulling 7,896 web search results and 1,161 citations. The study compared GPT-5.3 Instant (the new default for free users) against GPT-5.4 Thinking. A few findings worth thinking about.

GPT-5.4 cites brand-owned websites 56% of the time. GPT-5.3 cites them 8% of the time. That's a 7x gap between two models running on the same search index, answering the same questions (Writesonic, March 2026). GPT-5.2, the model GPT-5.3 replaced, cited brand sites 22% of the time. So the free default got meaningfully worse for brand-owned content, and the thinking model went in the opposite direction.

Across 50 prompts, the average citation overlap between the two models was 7%. On 22 of those 50 prompts, there was literally zero overlap. Same question, same index, but completely different answers about which sources are worth trusting. Seems suspicious to me, and definitely something marketers need to be aware of.
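If you want to run a version of this check on your own prompts, here is a minimal sketch. It assumes overlap means shared domains divided by all distinct domains cited (a Jaccard ratio), which may not be exactly how the Writesonic study computed it. The function name and the domain lists are made up for illustration.

```python
def citation_overlap(sources_a, sources_b):
    """Jaccard overlap: shared domains divided by all distinct domains cited."""
    a, b = set(sources_a), set(sources_b)
    if not a and not b:
        return 0.0  # neither model cited anything, so there is nothing to share
    return len(a & b) / len(a | b)


# Hypothetical citations for one prompt, one list per model.
instant = ["forbes.com", "reddit.com", "g2.com"]
thinking = ["yourcompany.com", "competitor.com", "g2.com"]

overlap = citation_overlap(instant, thinking)
print(f"{overlap:.0%}")  # prints 20% (1 shared domain out of 5 distinct)
```

Run it per prompt and average the results, and you have your own overlap number to put next to the 7% above.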

GPT-5.4 also behaves differently mechanically. It decomposes each prompt into roughly 8.5 sub-queries, and it uses site: operators to query brand domains directly, which is a behavior no previous ChatGPT model used at all. In 50 prompts, GPT-5.4 sent 156 site: queries. That's the model looking up your brand on your own website rather than reading what someone else wrote about you.

GPT-5.3, the new free default, is doing the opposite. According to Resoneo's analysis of 27,000 responses over 14 weeks, GPT-5.3 cites about 20% fewer unique domains per response than its predecessor. Average unique domains per response dropped from 19 to 15. Average unique URLs dropped from 24 to 19.

So the free version is citing fewer things, mostly third parties, the thinking version is citing more things, mostly brand sites, and the citation overlap between them is single digits. If you're still treating ChatGPT as one channel, you're averaging two different things and calling it a trend.

The ghost citation problem that might actually be scarier than a real ghost

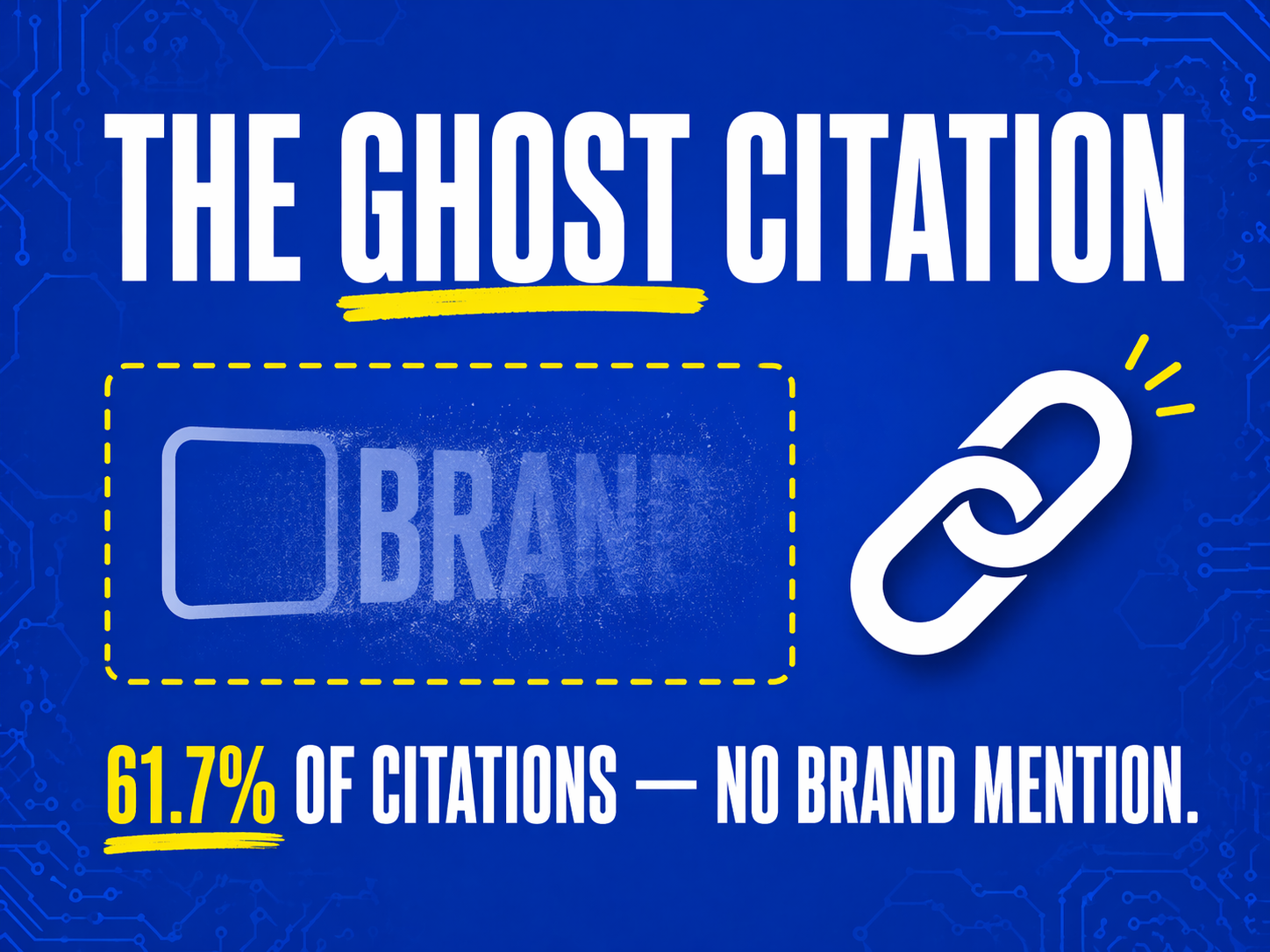

While everyone's been watching the model split, there's a separate data point from Growth Memo in April 2026 that keeps rolling around in the back of my mind. 61.7% of LLM citations are what they're calling ghost citations, where the domain gets a source link but the brand name never appears in the answer text the user reads. Only 13.2% of brand appearances produce both a citation link and a visible mention.

Gemini is the worst offender. It mentions brands in 83.7% of responses but only generates a citation link 21.4% of the time. So most of the time Gemini is name-dropping brands without sending a shred of attribution, and a lot of the rest of the time it's linking to sources without saying whose source it is. Either way, the user doesn't know you exist and somewhere my old college English professor is cringing about lack of citations.

That matters because the whole pitch of AI search visibility, as most agencies are selling it, is that citations translate into brand awareness. A citation without a mention barely does that. A mention with no citation doesn't send traffic. The only thing that actually builds awareness is both at once, and that's happening in about one out of every eight appearances. Not cool.

If you're paying for a GEO or AEO retainer, the number you should be asking your agency for is not citations. You need to directly ask about the co-occurrence rate of citation and mention for your brand, on each model, across your target prompts. If they don't have that number, they're measuring the wrong thing. You can tell them I said that if you want to blame someone.
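For anyone who wants to see what that number looks like in practice, here is a rough sketch. It assumes you already have one record per brand appearance with a model label and two flags, `cited` and `mentioned`. Those field names, the model labels, and the sample rows are all hypothetical, not anyone's real export format.

```python
from collections import defaultdict


def co_occurrence_by_model(appearances):
    """Per model, the share of appearances that have both a link and a visible mention."""
    totals = defaultdict(int)
    both = defaultdict(int)
    for row in appearances:
        totals[row["model"]] += 1
        if row["cited"] and row["mentioned"]:
            both[row["model"]] += 1
    return {model: both[model] / totals[model] for model in totals}


# Hypothetical appearance log for one brand across a set of target prompts.
appearances = [
    {"model": "gpt-5.3-instant", "cited": True, "mentioned": False},  # ghost citation
    {"model": "gpt-5.3-instant", "cited": False, "mentioned": True},  # mention, no link
    {"model": "gpt-5.3-instant", "cited": True, "mentioned": True},   # the one that counts
    {"model": "gpt-5.3-instant", "cited": True, "mentioned": False},  # ghost citation
    {"model": "gpt-5.4-thinking", "cited": True, "mentioned": True},
    {"model": "gpt-5.4-thinking", "cited": True, "mentioned": False},
]

rates = co_occurrence_by_model(appearances)
for model, rate in rates.items():
    print(f"{model}: {rate:.0%} of appearances have both a citation and a mention")
```

The point of keeping the two flags separate is that the ghost citations and the link-less mentions stay visible instead of getting folded into one score.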

What used to work and what doesn't anymore

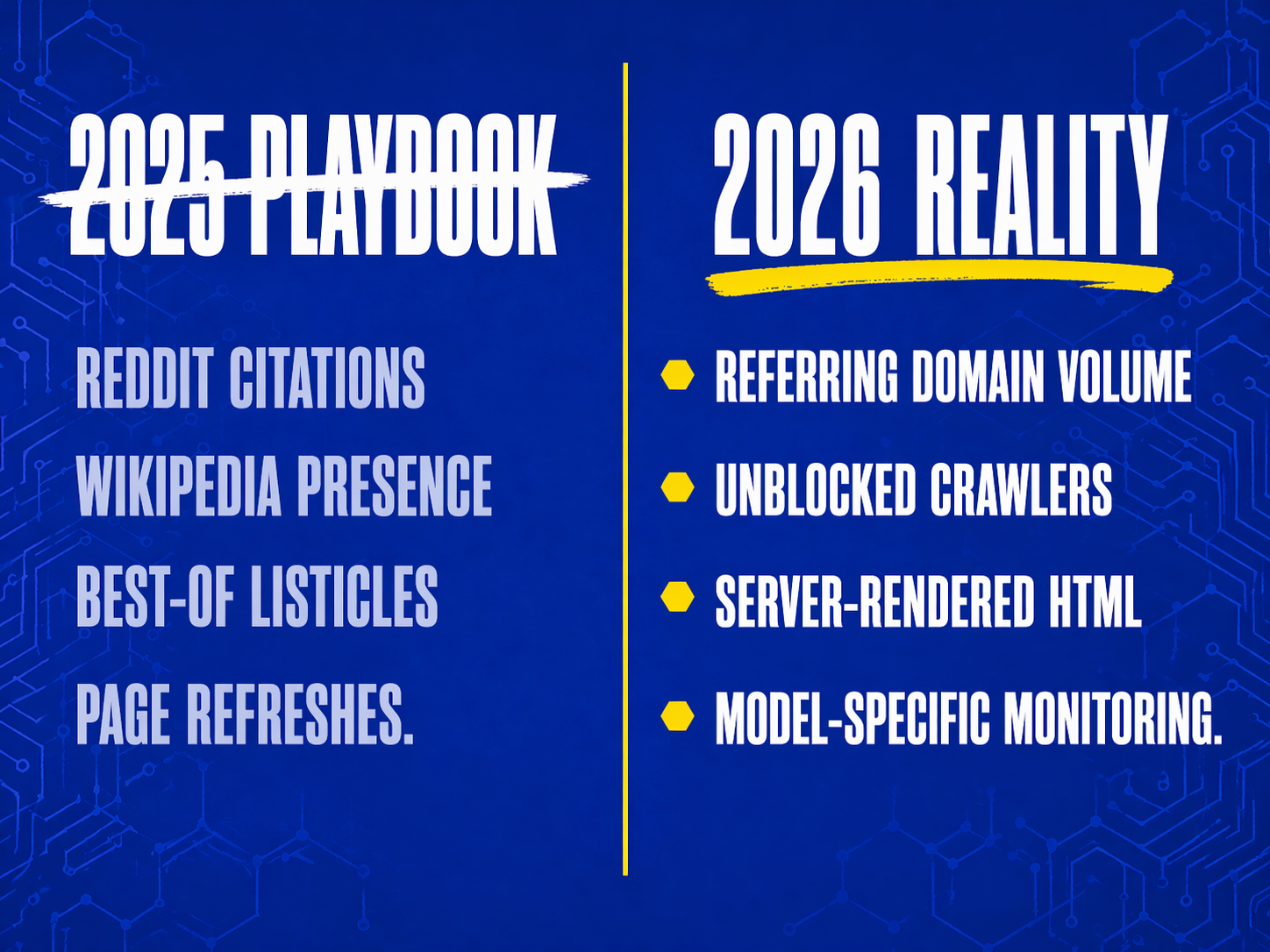

Through late 2025, the playbook most of us were running on AI visibility looked something like this: get cited on Reddit, get cited on Wikipedia, get mentioned in a bunch of “best of” listicles on high-DA publishers, and refresh your top pages regularly. That was the advice, and it worked for the most part because those were the sources ChatGPT was citing at the time.

Six months later, the picture looks different.

Reddit is still huge, but wildly unstable (and honestly scary if you creep too far down some rabbit holes). Tinuiti's Q1 2026 AI Citations Trends Report showed that Reddit citation share grew at least 73% from October 2025 to January 2026 across all nine tracked categories, with Perplexity pulling 24% of its January citations from Reddit alone. Good news if you're invested there. The catch is that the variance across platforms is absurd. Reddit accounts for over 5% of ChatGPT citations but only 0.1% of Gemini citations. The same brand can look dominant on one AI and invisible on another, and most monitoring tools are averaging across all of them. My last post had some interesting test results that confirm this.

Reddit also has a recency problem. Profound's analysis found that the average Reddit post cited by AI models in 2025 was posted roughly a year earlier, and 4% of cited posts were from 2019 or older. So if your product shipped a major update in the last eighteen months, there's a really good chance that AI is still recommending against a version of your product that doesn't exist anymore.

Listicles (BuzzFeed, what a creation you founded…), which were the core of most GEO strategies a year ago, are getting less useful. Seer Interactive found ChatGPT listicle citations dropped 30% between December 2025 and January 2026, and Gemini cut “best of” listicle citations by 40% in April. The playbook of getting your brand added to a Forbes roundup is still better than nothing, but the returns are compressing.

Meanwhile, the things that are working aren't the things most agencies are selling. SE Ranking found that referring domains are the single strongest predictor of ChatGPT citations, with a clear threshold effect at 32,000 referring domains. Below that, you're rolling the dice; above it, you're in the pool. That's a backlink problem, not a content problem, and it's the boring long-slog stuff that nobody wants to expense.

What to do about it

A few things, in order of how much they'll move your visibility.

Figure out which ChatGPT your customers are using. Free users are on GPT-5.3 Instant by default, and they outnumber Plus users by an enormous margin. But high-intent buyers, especially in B2B where the purchase decision matters, are disproportionately Plus or Pro users running on GPT-5.4. Your deal-flow prospects and your casual researchers are getting different answers from different models, and the strategy to reach them is different.

For GPT-5.3 visibility, you're optimizing to be the brand that gets mentioned on the third-party publishers that 5.3 still cites. That's classic PR work like earned placements on Forbes, Business Insider, category-specific trade publications, and whatever your industry's equivalent of G2 or Capterra is.

For GPT-5.4 visibility, you're optimizing to be the brand the model picks up via site: operator, which puts the pressure on your own website to answer the question. If someone asks “best commercial lawn care services in my area” and GPT-5.4 does a site:yourcompany.com "commercial lawn services" query, what does it find? Is the answer on a product page? A comparison page? An FAQ? Or is it buried in a PDF that the crawler can't parse well? Server-rendered HTML with clean structure, visible pricing, visible feature comparisons, and real FAQ content is what GPT-5.4 is looking for.
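One quick way to sanity-check this is to look at what your page says before any JavaScript runs. The sketch below assumes the crawler reads raw HTML and does not execute scripts, which is an assumption here and not something OpenAI has confirmed in anything cited above. The class name, helper name, sample pages, and the price in them are all invented for illustration.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style>, roughly what a non-JS reader sees."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def answers_in_raw_html(html, phrase):
    """True if the phrase appears in the page's visible text without running scripts."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()


# Hypothetical pages: one server-rendered, one that fills itself in with JavaScript.
server_rendered = (
    "<html><body><h1>Commercial lawn services</h1>"
    "<p>Plans start at $199 per month.</p></body></html>"
)
client_rendered = (
    '<html><body><div id="app"></div>'
    '<script>render("Commercial lawn services")</script></body></html>'
)

print(answers_in_raw_html(server_rendered, "commercial lawn services"))  # True
print(answers_in_raw_html(client_rendered, "commercial lawn services"))  # False
```

If your real pages look like the second one, the answer exists, but only for visitors whose browser does the work.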

Check your robots.txt today. Don’t wait around for this one. Status Labs found that among eight clients who had inadvertently blocked GPTBot, removing the block produced first citations within 14 to 21 days. I've seen this on a dozen sites where someone in 2023 added a block because of scraping concerns, forgot about it, and the brand has been commercially invisible in AI search ever since. Fifteen minutes of work, measurable results in under a month. If you do nothing else this quarter, do that.
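Here is a minimal sketch of that check using Python's standard-library robots.txt parser. The two robots.txt samples and the `gptbot_allowed` helper name are made up for illustration, and `yourcompany.com` is a placeholder. The parser follows standard robots.txt rules, so treat the result as a first pass rather than a guarantee of how any particular crawler behaves.

```python
from urllib.robotparser import RobotFileParser


def gptbot_allowed(robots_txt, url, agent="GPTBot"):
    """Return True if the given robots.txt text lets the agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Hypothetical file with the kind of block someone adds and then forgets about.
blocked = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical file with no GPTBot rule, so the wildcard rules apply.
unblocked = """User-agent: *
Disallow: /admin/
"""

print(gptbot_allowed(blocked, "https://yourcompany.com/pricing"))    # False
print(gptbot_allowed(unblocked, "https://yourcompany.com/pricing"))  # True
```

To test a live site instead of pasted text, the same class can fetch the file for you with `set_url()` followed by `read()`.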

Fix the Wikipedia situation if you have one. Status Labs' data on their own clients showed companies with comprehensive Wikipedia articles hit first ChatGPT citations in an average of 28 days, versus 52 days for companies without one. Wikipedia is also the most cited source in ChatGPT's top 10, at roughly 48% of its top-source share. The hard part is that Wikipedia's notability rules are brutal, and you can't just write your own entry. You have to earn enough independent press coverage that a Wikipedia editor decides you're notable. That's a year-long project, which is why most mid-market brands skip it.

Stop measuring citations as a single number. Start measuring mention-and-citation co-occurrence, by platform, by model. This is the only metric that actually correlates to awareness lift. If your current dashboard shows you a single “AI visibility score,” ask your vendor what that score is composed of and how it handles the ghost citation gap. If the answer is vague, you're looking at a vanity metric.

Accept that this is going to keep moving. Conductor research showed Reddit's sole-source citation rate rose 31% in the same period its overall frequency dropped 50%, meaning when LLMs cite Reddit now, it's increasingly the only thing they're citing. That's an entirely different risk than what most brands are tracking. When Reddit sued Perplexity last October, Perplexity's Reddit citation share dropped 86% overnight, with YouTube filling the gap. Citation graphs can break in a day.

Where the vendor pitches are off

A lot of what's being sold right now under the GEO and AEO banner is content work playing dress up in new vocabulary: write better FAQ sections, add schema markup, publish more guides. Some of that matters, but we all know that none of it is new. What's driving citation outcomes in 2026 is a mix of things that are either boring (backlinks, Wikipedia, clean HTML, unblocked crawlers) or unpleasant (platform-by-platform auditing, multi-model monitoring, accepting that your brand has two ChatGPT footprints and needs a different strategy for each).

If your agency's pitch deck for 2026 looks a lot like their 2025 deck with a bunch of acronyms swapped, you're paying someone to not do the work. The brands that will get cited by name in ChatGPT answers next year are the ones doing the boring plumbing this year, and the ones that won't are still running the 2024 content playbook and hoping nobody notices.

Jarred Smith is the author of Explainable: Why AI Recommends Some Brands & Ignores Others, an Amazon bestseller on AEO, GEO, and SEO. He's a marketing leader with nearly 20 years of experience across healthcare, public media, retail, and environmental services. Find him at jarredsmith.com.