Are AI Searches Biased Toward You? What Memory, Location, and Personalization Actually Do to Your Answers

One question I keep getting, and one that AEO/GEO people haven't handled well, is this: when I ask an AI search tool something, how much of the answer is based on what the model knows about me? Not the topic, but me: my past chats, my location, my job, my age, brands I've mentioned, and so on.

I don’t think the industry has a clean answer to that yet, so this post is me working through what I can demonstrate, what I strongly suspect, and what it means if you’re trying to get cited in AI search.

The simple version of the question:

If you and I both type the same prompt into ChatGPT today, do we get the same answer? Probably not, and the gap between our answers is the part of AI search that nobody is really pricing in.

We’ve accepted that traditional Google has done localized and personalized results for years. However, there’s a difference between Google ranking a local plumber higher because you’re in Tulsa and an AI engine writing a summary that pulls from the fact that you mentioned, eight months ago, that you prefer plain language over jargon. One is a sort; the other is a rewrite, and I think we’ve been treating them as the same thing.

What ChatGPT does today

OpenAI is the most transparent of the major players about this, so let’s start there. Per their own memory documentation, ChatGPT now operates with two layers of personalization that are on by default for paid users:

Saved memories

Things you’ve explicitly told it to remember, or that it decided were worth keeping. These are persistent until you delete them.

Reference chat history

It pulls “useful information” from your past conversations even when you didn’t ask it to remember anything. I like to refer to this as “the sleeper.”

The chat history layer is the interesting one because it’s not a bullet list you can audit. It’s a fuzzy, evolving sense of who you are based on every conversation you’ve had. OpenAI describes it as letting ChatGPT “learn about your interests and preferences,” which is a polite way of saying the model is building a profile and consulting that profile when it answers you.

If you turn off “Reference chat history,” ChatGPT won’t pull from past conversations going forward. There’s a quieter detail in the docs that I think people miss, though, which is that turning saved memories off doesn’t delete what’s already there. The file stays where it is; you just stop reading from it.

As of their January 2026 release notes, ChatGPT’s ad-supported tier also uses your past chats and memories for ad personalization, which means the same profile that shapes your answers is now shaping which of those answers happen to be sponsored.

What about Incognito or Temporary Chat?

People assume Temporary Chat or Incognito mode gives them a clean-room view of how the engine answers questions, and it mostly doesn’t.

ChatGPT’s Temporary Chat mode does skip memory in both directions, meaning that it won’t reference your existing memories, and it won’t write new ones. That part is true, and if you want to test how an AI engine answers a question without your fingerprints all over it, Temporary Chat is the closest thing to a fair test you’ve got inside ChatGPT.

But there are a few things Temporary Chat doesn’t reset:

Your account exists and the session is still tied to a logged-in user

Your IP address, and therefore your approximate location, still rides along

Any account-level settings (your custom instructions, your selected language, your subscription tier, the model you’re using) still apply

Browser-level signals, cookies, and any A/B tests OpenAI has you bucketed into are still there

Temporary Chat strips the deepest personalization layer but it doesn’t give you a view of “what does the model say to a stranger.” If you want to really test that, you need to create a fresh account on a different device on a different network, and even then you’ve still given OpenAI your location, an age range, a phone number, and a payment method during signup.

Perplexity is the most explicit about it

Perplexity is interesting (or should I say perplexing) because they put the personalization right on the tin. In their account settings documentation, the Personalization page asks you for:

Your occupation

Your company name

Your date of birth

Your gender (optional)

Custom instructions (a free-text “tell us about yourself”)

Whether to share your precise location

And they’re upfront that “responses will be based on your network location” if you don’t set a location yourself. That means even if you don’t tell Perplexity you’re in Oklahoma City, it’s already approximating it from your IP.

I actually respect Perplexity for being upfront about this rather than burying it in a privacy policy. But it does mean every Perplexity answer you’ve ever gotten has been shaped, at least a little bit, by what they’ve inferred about your role, your location, and what you’ve asked before. An engineer in Oklahoma asking about wastewater compliance is going to get a different answer than a marketing director in Toronto entering the exact same prompt. Good to know, right?

Google AI Overviews and AI Mode

Google is the murkiest case because they’ve been personalizing search results based on location and history for fifteen-plus years. AI Overviews and AI Mode inherit that whole apparatus: your search history, your YouTube watch history if you’re signed in, your location, your device, whether you’ve recently clicked into a particular publisher, the works.

The Google result, in other words, was already biased toward you long before AI Overviews showed up. The summary at the top is doing the same personalization the underlying ranker has been doing for years, except it’s writing the answer for you instead of just sorting links.

So yes, AI searches are biased toward you

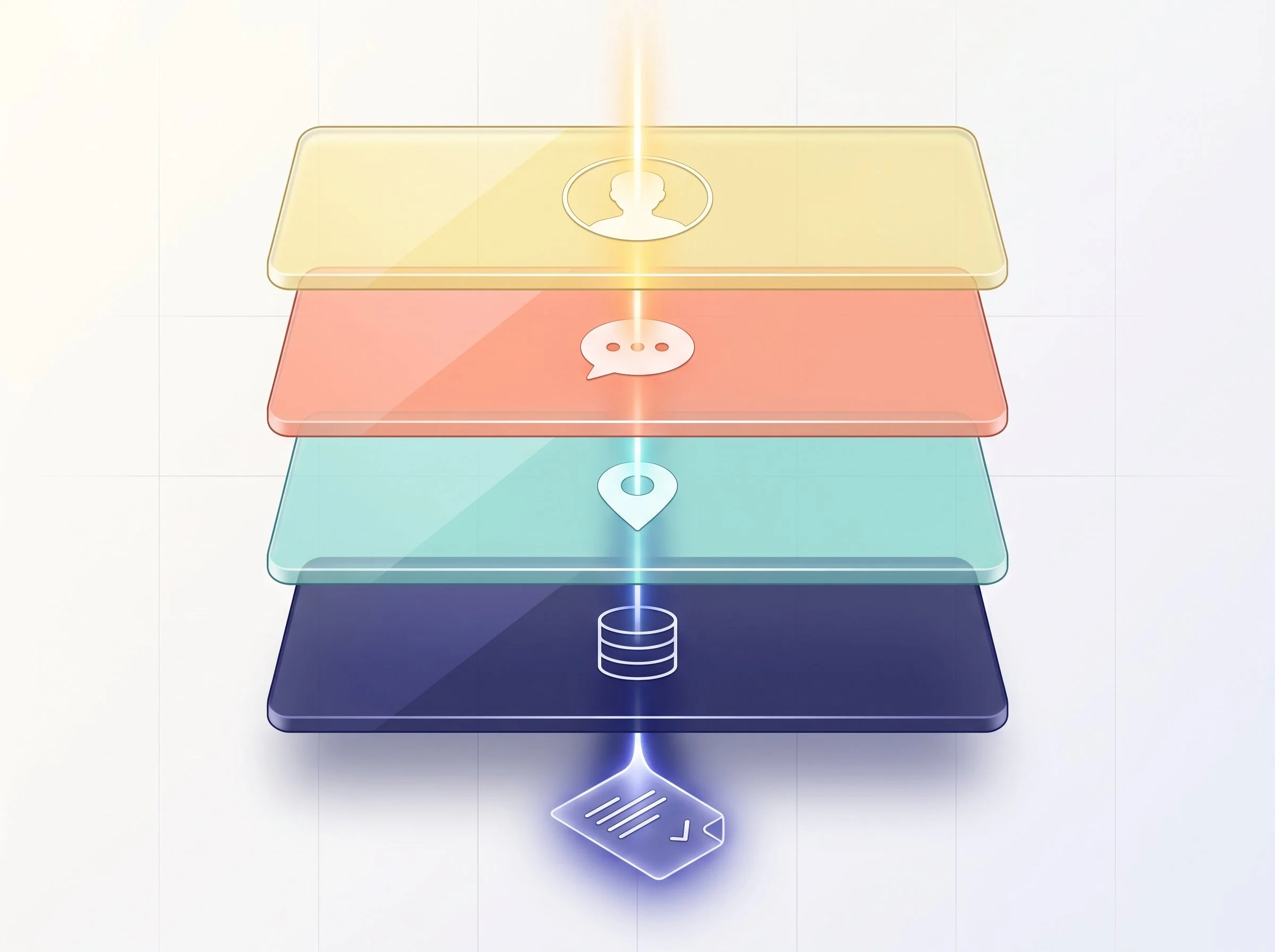

The answer to the original question is yes, with a few layers I’d separate out so we can talk about them clearly.

Explicit Personalization

What you’ve told the engine directly, including your bio, your custom instructions, and your saved memories. This is the easiest layer to audit with the most user control.

Implicit Personalization

What the engine has learned about you from your behavior: past conversations, past clicks, and things you’ve asked about repeatedly (surely you know how many teaspoons equal a tablespoon by now, right?). This is the layer that’s hardest to see, because there’s no list anywhere you can pull up and review. The model has built a vibe of who you are, and that vibe leaks into every answer.

Environmental Signals

Your location, your device, your time of day, your language, your account tier. These aren’t strictly personal, but they hitch a ride with you everywhere.

Training-time Bias

This is the layer the user can’t touch and it’s what we usually mean when we say “AI bias.” The model was trained on certain sources, weighted certain voices, and built certain associations long before you ever showed up. That bias is the same for everyone using the same model, but it’s still bias, and I think it’s something to consider when researching this.

When somebody asks “is AI search biased,” that fourth layer is usually what they have in mind. The day-to-day question for marketers is really what layers one through three are doing to the answers your customers see about your category, and I haven’t seen many people working on that one yet.

Why this matters if you’re trying to get cited

This is where I think the AEO/GEO conversation gets more interesting.

If three different people in three different roles ask the same question about your industry, they may get three different answers, and your brand may show up in one of them and not the others. What decided which answer you showed up in wasn’t your authority score, your domain rating, or your structured data. It was the user’s profile.

This is a problem most AEO advice doesn’t talk about. The standard playbook says to write clearly, structure your content for extractability, use schema, build authority through citations and mentions, and aim for the language an LLM is going to pick up. That still holds up (mostly), but it assumes a single user asking a single question with a single right answer, which isn’t the reality that AI search runs in.

Here are a few things I think can help

Test your prompts from multiple profiles

Don’t audit your AI visibility from your own account. You’re the most personalized person in your own data. Instead, run the test queries from a fresh account on a different network, ideally a different city if you can swing a VPN, and compare those answers with what you see from your usual desk. The gap between the two will tell you how much your normal audit is being flattered by the engine knowing you.
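If you want to put a rough number on that gap, here is a minimal sketch in Python. It assumes you have pasted the answer text from each profile in by hand (it does not call any AI engine), and it simply checks which brands from a watchlist appear in each answer and how much the two sets overlap. The brand names, profile labels, and answer text are all made up for illustration, and brand-overlap is just one crude way to measure the difference, not an established AEO metric.

```python
import re


def brand_mentions(answer: str, brands: list[str]) -> set[str]:
    """Return the watchlist brands that appear in an answer (case-insensitive)."""
    found = set()
    for brand in brands:
        pattern = r"\b" + re.escape(brand) + r"\b"
        if re.search(pattern, answer, flags=re.IGNORECASE):
            found.add(brand)
    return found


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two sets: 1.0 means identical, 0.0 means nothing shared."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def compare_profiles(answers: dict[str, str], brands: list[str]) -> dict[tuple[str, str], float]:
    """Score every pair of profiles by how similar their brand mentions are."""
    mentions = {profile: brand_mentions(text, brands) for profile, text in answers.items()}
    names = sorted(mentions)
    return {
        (x, y): jaccard(mentions[x], mentions[y])
        for i, x in enumerate(names)
        for y in names[i + 1:]
    }


# Hypothetical brands and hand-copied answers, for illustration only.
brands = ["Acme Water", "Borealis", "ClearFlow"]
answers = {
    "own_account": "For wastewater compliance, Acme Water and ClearFlow are common choices.",
    "fresh_account": "Many teams start with ClearFlow or Borealis for wastewater compliance.",
}

for pair, score in compare_profiles(answers, brands).items():
    print(pair, round(score, 2))
```

A low overlap score between your own account and the fresh one is the signal to look closer. It doesn’t tell you why the answers differ, only that they do.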

Stop optimizing for a single answer

If three roles get three answers, your job isn’t to win “the” answer. Your job is to make sure you’re present across the realistic spread of answers your buyers might see. That means writing for the engineer, the procurement lead, and the C-suite version of the same question rather than picking one and hoping it lands.

Watch your geographic mix

Location is the personalization signal that bites first. If you sell across regions, your AI visibility is going to be uneven by city in ways you probably can’t see from your desk, so build a few region-specific test profiles into whatever audit cadence you’re running.
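One way to keep those region-specific profiles organized is a simple test matrix. The sketch below is an assumption about how you might structure it, not a standard tool: it crosses your prompts with regions and roles so nothing gets skipped, then summarizes how often your brand showed up per region once you’ve recorded the results by hand. The field names and the sample results are hypothetical.

```python
from collections import defaultdict
from itertools import product


def build_test_matrix(prompts: list[str], regions: list[str], roles: list[str]) -> list[dict]:
    """One test case for every prompt x region x role combination."""
    return [
        {"prompt": prompt, "region": region, "role": role}
        for prompt, region, role in product(prompts, regions, roles)
    ]


def mention_rate_by_region(results: list[dict]) -> dict[str, float]:
    """Share of recorded tests in each region where the brand was mentioned."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in results:
        totals[row["region"]] += 1
        hits[row["region"]] += int(row["brand_mentioned"])
    return {region: hits[region] / totals[region] for region in totals}


matrix = build_test_matrix(
    prompts=["best wastewater compliance vendors"],
    regions=["Tulsa", "Toronto"],
    roles=["engineer", "procurement lead", "executive"],
)
print(len(matrix), "test cases to run")

# Hypothetical results, filled in by hand after running each test case.
results = [
    {"region": "Tulsa", "role": "engineer", "brand_mentioned": True},
    {"region": "Tulsa", "role": "executive", "brand_mentioned": False},
    {"region": "Toronto", "role": "engineer", "brand_mentioned": False},
    {"region": "Toronto", "role": "executive", "brand_mentioned": False},
]
print(mention_rate_by_region(results))
```

Re-running the same matrix each audit cycle gives you something to compare against, which matters more than the exact numbers from any single run.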

Treat custom instructions as a market

A real and growing share of users now have written instructions like “I work in healthcare, give me clinical sources” or “I’m a marketing director, prioritize practitioner blogs over academic papers.” Those instructions are routing real traffic and real attention. You don’t get to write them, but you should be thinking about which custom-instruction profiles your content is going to get pulled into, and which ones it isn’t.

Don’t trust your own audit

This is the one I want most people to take seriously. If you ran an AEO audit last quarter from your own laptop, on your own account, on your own home Wi-Fi, you got a personalized answer about your own brand, and you probably saw yourself more than a stranger would. Run it again from a profile that doesn’t know you.

Where I land

AI search is not a neutral utility, and I don’t think it ever will be. The whole product proposition of these engines is that they get to know you and answer better because of it, which means the personalization is baked into the value prop. Asking the engine to be impersonal is asking it to be worse at the job it’s being judged on.

The more useful work, instead of fighting the personalization, is figuring out which layers are doing what to your visibility and testing against them with some honesty. The brands that figure out how to be present across a spread of personalized answers, instead of optimizing for the one they happen to see from their own desk, are going to win the next round of AI search the same way the brands that figured out localized SEO won the last one.

I get into the mechanics of why some brands consistently get pulled into AI answers and others don’t in Explainable. Personalization is one piece of the puzzle, but a lot of it comes back to what the model has decided your brand is for in the first place, which is a story I’ll save for another post.

Jarred Smith is the author of Explainable: Why AI Recommends Some Brands & Ignores Others, an Amazon bestseller on AEO, GEO, and SEO. He’s a marketing leader with nearly 20 years of experience across healthcare, public media, retail, and environmental services. Find him at jarredsmith.com.